The inventory gave me a dependency graph. Now I needed to order it into an architecture.

Password manager first. Everything depends on credentials. If I can't log into services, nothing else moves.

Then email, because everything depends on a stable address. Every account migration involves updating the registered email. Then authentication: 2FA codes, migrated service by service as each account is touched. Then everything else in descending order of criticality. The infrastructure to support all of this has to exist before any of it begins.

I had to pause at that last point. I couldn't just start installing things. Where does the infrastructure live? How do the pieces connect? What happens when something breaks? These are architecture questions, and every answer constrains the answers that follow.

I didn't want to skip this step. If I installed software first and let the architecture emerge from ad-hoc decisions, I wouldn't end up with something sustainable.
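Ordering a dependency graph like this is a topological sort. As a rough sketch, the migration order described above could be expressed with Python's standard `graphlib` module. The node names and edges here are an illustrative reading of that ordering, not the actual inventory.

```python
from graphlib import TopologicalSorter

# Each key depends on the items in its set. These edges are assumptions
# made for illustration; the real inventory has many more nodes.
DEPENDS_ON = {
    "password_manager": {"infrastructure"},
    "email": {"infrastructure", "password_manager"},
    "two_factor": {"email"},
    "file_sync": {"two_factor"},
    "photo_storage": {"two_factor"},
}


def migration_order(depends_on):
    """Return one valid order in which every item follows its dependencies."""
    return list(TopologicalSorter(depends_on).static_order())


order = migration_order(DEPENDS_ON)
print(" -> ".join(order))
```

Items with no dependency between them (here, file sync and photo storage) can come out in either order, which is where "descending order of criticality" would act as the tie-breaker.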

Where things run

The first question was the most consequential: does everything run at home, everything on a rented server, or some mix?

Running everything at home is the purest expression of autonomy. Your hardware, your network, your data. But it centralises everything behind a single residential internet connection. When the ISP has an outage, and European residential ISPs have outages, everything goes dark. Email doesn't arrive. Passwords aren't accessible. Calendar entries vanish until the connection returns.

Running everything on a VPS avoids this, but at the cost of the thing you're supposedly pursuing. Your data lives on someone else's disk, in someone else's data centre, subject to someone else's terms of service. You've replaced one landlord with another.

I went with a hybrid model. Services that cannot tolerate downtime run on a VPS with professional uptime. Everything else runs at home, on hardware I own, with data that never leaves my premises unless encrypted. The split criterion was simple: how bad is it if this service is unreachable for a few hours?

Email and passwords are non-negotiable. If I can't receive email or access credentials, I'm locked out of everything. These run on a VPS. File sync, photo storage, home automation, media: these can survive an afternoon without internet. These run at home.
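The split criterion fits in a few lines. This is a minimal sketch: the cut-off and the per-service hour figures are hypothetical numbers picked for illustration, not measurements.

```python
from dataclasses import dataclass

# Hypothetical cut-off: a service that cannot be unreachable for this many
# hours goes on the VPS. The value is an assumption.
MAX_HOME_OUTAGE_HOURS = 3.0


@dataclass(frozen=True)
class Service:
    name: str
    tolerable_outage_hours: float  # how long it may be unreachable


def placement(service, cutoff=MAX_HOME_OUTAGE_HOURS):
    """Return 'home' if the service can ride out a home outage, else 'vps'."""
    return "home" if service.tolerable_outage_hours >= cutoff else "vps"


# Illustrative tolerances only.
SERVICES = [
    Service("email", 0.0),
    Service("passwords", 0.0),
    Service("file_sync", 6.0),
    Service("photo_storage", 24.0),
    Service("home_automation", 6.0),
    Service("media", 24.0),
]

plan = {service.name: placement(service) for service in SERVICES}
for name, where in plan.items():
    print(f"{name}: {where}")
```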

This isn't a novel architecture. It's what most organisations converge on, and the reasoning is identical: critical services get professional infrastructure, everything else gets cost-optimised. The difference is that here, "cost-optimised" also means "sovereignmaxxing."

Once I'd committed to a VPS for critical services, I had to think about isolation. I had an existing VPS that already ran some gaming-related services, serving untrusted connections. Putting personal email on the same machine creates a shared point of failure that doesn't need to exist. A second VPS costs a few euros per month and buys separation: a compromised public-facing service doesn't give an attacker proximity to my credentials.

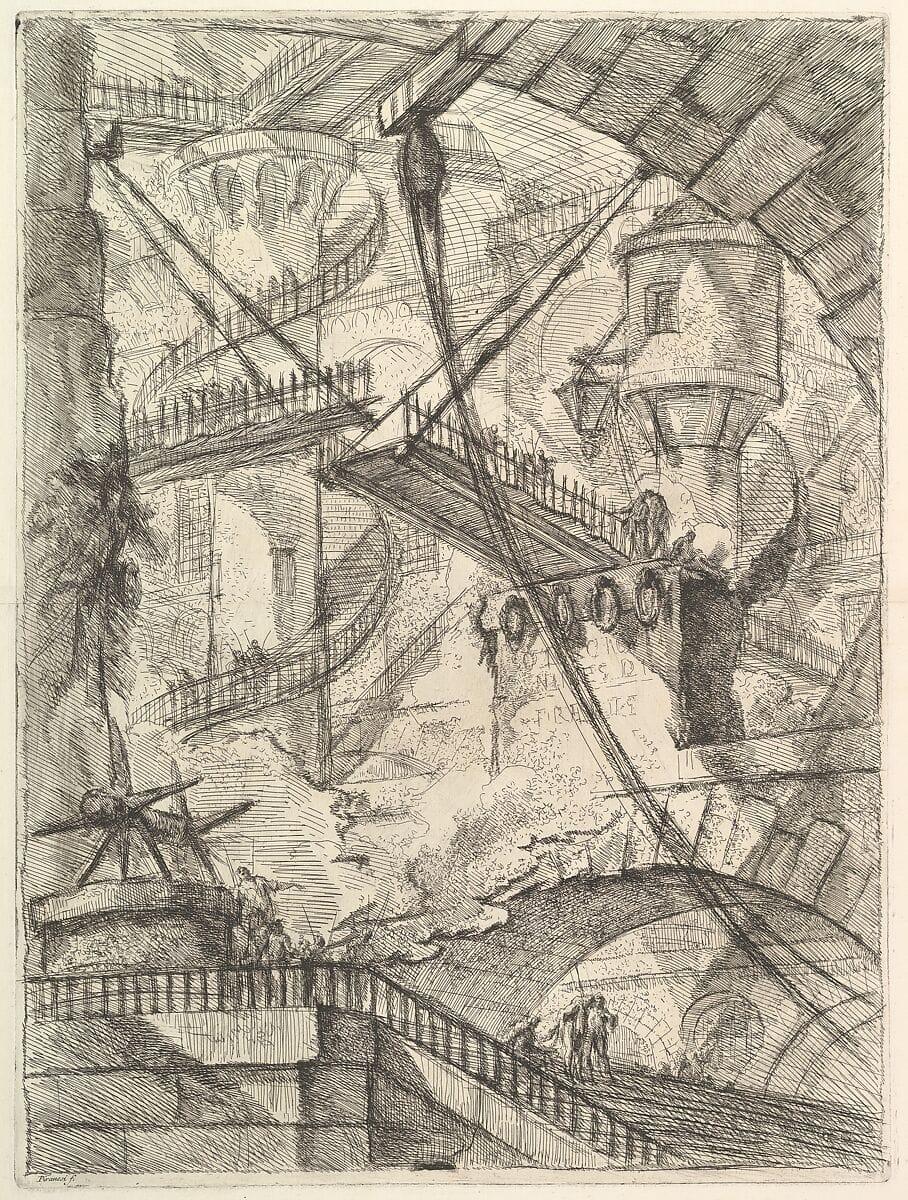

The home server runs a hypervisor instead of running services directly on the host OS. A hypervisor is easiest to imagine as a strict bouncer at a big event space with many rooms: each event gets its own room, and a bouncer ensures the guests never mix.

This lets me run a stable production VM alongside an experimental one where I can try different approaches without putting live services at risk. One of my values was iteration over perfection. Infrastructure should accommodate learning, not just operation. A stack that can't tolerate experimentation will eventually stagnate.

How things connect

Home services need to be reachable from the internet, but exposing a residential IP address is an unnecessary disclosure. The solution is creating a "tunnel" through the interwebs: the home server connects outward to the VPS, and the VPS channels incoming traffic through that tunnel back to the home server.

From the outside, every service appears to be running on the VPS. The home IP is never visible. No ports are opened on the home router. The residential network remains invisible.

I chose WireGuard for the tunnel. It's fast, minimal, well-audited, and needs only a single configuration file per endpoint. This is a common pattern: expose services through a controlled gateway rather than opening your internal network directly. At personal scale, it's simple to implement and solves several problems at once: no dynamic DNS needed, no port forwarding configuration, no exposure of the home network to people who have no business in my home.
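To make "a single configuration file per endpoint" concrete, here is a sketch that renders the two files in the `wg-quick` style. The tunnel addresses, the hostname `vps.example.com`, and the key placeholders are all assumptions for illustration. The important asymmetry is that only the home side has an `Endpoint`, because it is the one dialling out, and it sends keepalives so the router's NAT mapping stays open.

```python
WG_PORT = 51820  # WireGuard's conventional default port

# Hypothetical tunnel addresses and hostname, chosen for this example.
VPS_TUNNEL_IP = "10.8.0.1"
HOME_TUNNEL_IP = "10.8.0.2"
VPS_HOST = "vps.example.com"


def render_vps_config(vps_private_key, home_public_key):
    """VPS side: listens on a public port and never dials the home network."""
    lines = [
        "[Interface]",
        f"PrivateKey = {vps_private_key}",
        f"Address = {VPS_TUNNEL_IP}/24",
        f"ListenPort = {WG_PORT}",
        "",
        "[Peer]",
        f"PublicKey = {home_public_key}",
        f"AllowedIPs = {HOME_TUNNEL_IP}/32",
    ]
    return "\n".join(lines) + "\n"


def render_home_config(home_private_key, vps_public_key):
    """Home side: connects outward, so no port is opened on the home router."""
    lines = [
        "[Interface]",
        f"PrivateKey = {home_private_key}",
        f"Address = {HOME_TUNNEL_IP}/24",
        "",
        "[Peer]",
        f"PublicKey = {vps_public_key}",
        f"Endpoint = {VPS_HOST}:{WG_PORT}",
        f"AllowedIPs = {VPS_TUNNEL_IP}/32",
        "PersistentKeepalive = 25",  # keeps the outbound NAT mapping alive
    ]
    return "\n".join(lines) + "\n"


# Placeholders only; real keys come from `wg genkey` and `wg pubkey`.
vps_conf = render_vps_config("<vps-private-key>", "<home-public-key>")
home_conf = render_home_config("<home-private-key>", "<vps-public-key>")
print(vps_conf)
print(home_conf)
```

The tunnel only provides the private link. Forwarding public traffic from the VPS into it (a reverse proxy, for example) is a separate piece not shown here.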

Every service runs in Docker containers. Docker is conceptually similar to a hypervisor, but with a fundamental difference: it's looser. Containers share the same underlying system but remain isolated enough to avoid interfering with each other. If the hypervisor is the event space bouncer, making sure every party stays in its assigned room, Docker is more of a skilled event space manager, letting the parties roam the whole venue and use all the amenities without fighting over resources.

Nearly every FOSS project ships a docker-compose.yml, essentially a recipe that tells the event manager how to set up the space for all different types of parties (the metaphor is working hard at this point). That ubiquity and ease of deployment make Compose the de facto deployment standard for self-hosting. There are alternatives, each with its own trade-offs. I won't get into them here, but this hybrid Docker/hypervisor setup felt right for me.

The email problem

Email is the most important service to migrate and the most dangerous to self-host. The danger isn't technical. Setting up a mail server is straightforward. The danger is deliverability.

Email delivery depends on reputation: IP reputation, domain reputation, correctly configured DNS authentication records (namely SPF, DKIM, and DMARC), and making sure your sending IP doesn't end up on a blocklist maintained by organisations with opaque inclusion criteria. A VPS IP address in a shared hosting range can easily be on a blocklist because of someone else's actions. Your carefully composed email to a bank, a government office, or a potential employer may land in a spam folder, and the receiving provider will never tell you.

If I were truly sovereignmaxxing here, I would run everything myself and fight the deliverability battle. It would be principled and admirable, and future generations will compose songs about me with their AI brainchips. It's also a poor risk calculation for email that's really critical.

I chose a hybrid approach: a self-hosted mail server for storage and access, paired with an external relay for the actual sending and receiving. The relay handles IP reputation, blocklist management, and spam filtering. My server handles IMAP, search, and storage. Mail lives on my VPS, under my control. But it enters and leaves through infrastructure maintained by people whose full-time job is email deliverability.

This is a pragmatic compromise. Sovereignty is about informed, intentional choices, not about maximising personal suffering. I chose which component to delegate and why. That's different from defaulting to Gmail because the alternative seems hard.

The European digital sovereignty debate often treats self-reliance and interdependence as opposites. They're not. Strategic autonomy means choosing your dependencies rather than inheriting them. Running your own mail storage while delegating delivery to a trusted provider is the personal equivalent of manufacturing your own chips while importing the lithography machines. You control what matters most and manage the rest through agreements you can exit.

What I decided, what I deferred

Backup architecture follows the standard 3-2-1 model: three copies, two different media, one offsite. Both servers back up to dedicated cloud storage with encrypted, deduplicated snapshots. Configuration files live in version control. The backup tool was chosen partly for interoperability: it produces output compatible with a more established alternative, meaning I can switch implementations without re-creating my backup history. Being locked into a single backup tool is exactly the kind of dependency this project exists to avoid.
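The 3-2-1 rule is easy to state and easy to get subtly wrong, so it helps to write it down as a check. This is a sketch under assumptions: the copy locations and media below are a hypothetical layout for one server, not a description of the actual setup.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BackupCopy:
    location: str
    medium: str    # e.g. "ssd", "hdd", "object_storage"
    offsite: bool


def satisfies_3_2_1(copies):
    """Three or more copies, two or more media, at least one copy offsite."""
    enough_copies = len(copies) >= 3
    enough_media = len({copy.medium for copy in copies}) >= 2
    has_offsite = any(copy.offsite for copy in copies)
    return enough_copies and enough_media and has_offsite


# Hypothetical layout: live data, a local snapshot, and a cloud snapshot.
copies = [
    BackupCopy("server disk (live data)", "ssd", offsite=False),
    BackupCopy("local snapshot disk", "hdd", offsite=False),
    BackupCopy("cloud storage snapshots", "object_storage", offsite=True),
]

print(satisfies_3_2_1(copies))      # all three conditions hold
print(satisfies_3_2_1(copies[:2]))  # two copies, none offsite
```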

Some decisions were explicitly deferred. I needed to save up for the home server hardware, thanks to the NAND chip crisis making SSDs more precious than shiny Pokémon. My best bet was to start with a virtual machine on my home desktop, where I can run a few less critical, lightweight services.

You also have to monitor your infrastructure. There's no need to wonder whether you're receiving mail when you can look at a dashboard and experience the relief that only a fully green 100% uptime stat can provide. Now you sleep peacefully knowing that if you're not receiving email, it's someone else's fault.

I didn't know what I wanted for monitoring yet, but knowing where to place it in the architecture was simple: it lives on the VPS, not on the home server. If your services and your monitoring share the same failure domain, an outage can silence both the system and the alarm. Running the monitor on the VPS means a home network outage triggers an alert rather than a silent gap in coverage. In the future this could improve with an external uptime service or a tiny second VPS, but at this stage, if the VPS goes completely down, the specifics won't matter much in the grand scheme of things. And if I investigate and it turns out it's just the monitor that was down, that would be a big relief.
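The failure-domain argument can be checked mechanically. In this sketch, the service names and the two domain labels (`"vps"` and `"home"`) are assumptions for illustration. A service is a blind spot when it shares a failure domain with the monitor, because one outage would take down both.

```python
def blind_spots(service_domains, monitor_domain):
    """Return services whose outage could also silence the monitor."""
    return sorted(
        name
        for name, domain in service_domains.items()
        if domain == monitor_domain
    )


# Hypothetical mapping of services to the failure domain they run in.
SERVICE_DOMAINS = {
    "email": "vps",
    "passwords": "vps",
    "file_sync": "home",
    "photo_storage": "home",
    "media": "home",
}

print("monitor on VPS :", blind_spots(SERVICE_DOMAINS, "vps"))
print("monitor at home:", blind_spots(SERVICE_DOMAINS, "home"))
print("external probe :", blind_spots(SERVICE_DOMAINS, "external"))
```

Placing the monitor on the VPS leaves the VPS services as the remaining blind spot, which is the gap an external uptime service or a tiny second VPS would close.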

I could have decided everything upfront. I could have specced the home server, picked every service, chosen every tool. But premature decisions are as dangerous as missing decisions. Deciding on hardware before understanding the actual resource requirements would optimise for the wrong constraints, even if it was obvious SSD prices were going to continue to go up. The architecture should identify decision points without forcing every decision to be made simultaneously.

Nothing in this architecture is original. A critical services VPS, a home server for everything else, an encrypted tunnel between them, backups to external storage, monitoring from the VPS. Every component is well-understood and widely deployed. The value isn't in novelty. It's in the explicit reasoning behind each choice, and in the values I set at the start doing their job. Security over convenience meant a second VPS for isolation, not consolidation for savings. Freedom over features meant Docker, not a proprietary orchestration platform. Autonomy over dependence meant self-hosted mail storage, even when fully delegated email would have been easier. Research over impulse meant deferring hardware decisions until I understood the requirements.

The next step is building it. One service at a time, starting with the foundation.

This is part of the Autonomous Stack series, documenting my migration from proprietary services to self-hosted infrastructure.

Previously: The Inventory I Should Have Done Years Ago.

Next: First Deployment (stay tuned).